Special education has always required individualized approaches. Whether through modified assignments, assistive technologies, or one-on-one support, schools have worked to provide equitable access to learning. Yet resources are limited, and implementation is uneven.

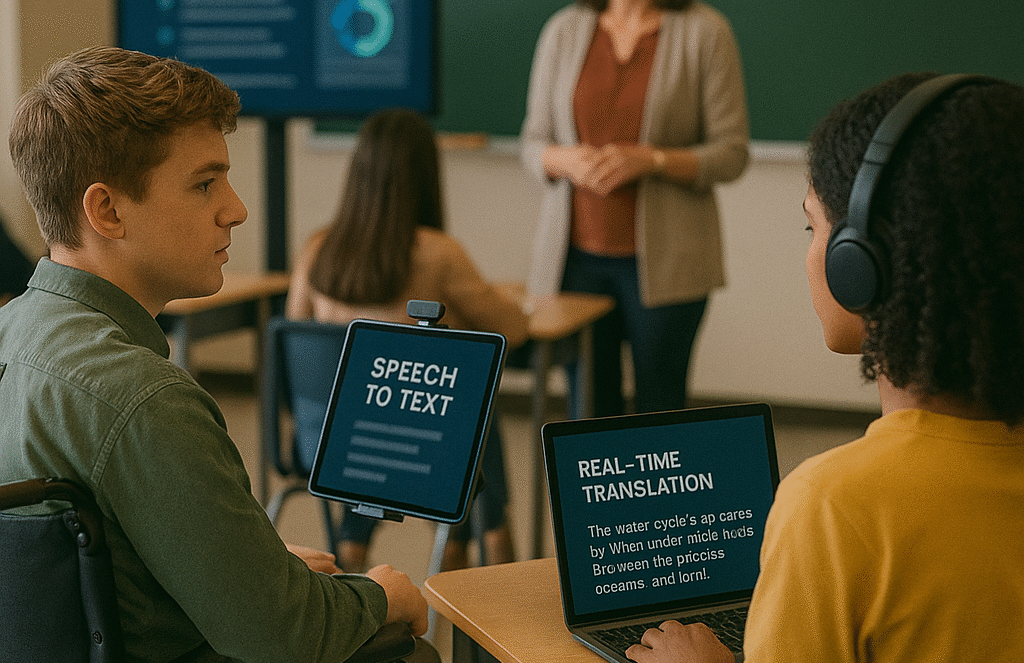

Artificial intelligence introduces a new set of tools that can expand accessibility at scale. AI-driven speech-to-text systems, real-time translation, and adaptive tutoring offer the potential to remove barriers that once excluded students from full participation. At the same time, these technologies raise pressing questions about funding, oversight, and alignment with legal protections such as the Individuals with Disabilities Education Act (IDEA).

How Artificial Intelligence Supports Accessibility

AI applications in special education and accessibility fall into several categories:

- Text-to-Speech and Speech-to-Text: Converts written materials into spoken words, or spoken responses into text, aiding students with dyslexia, speech impairments, or motor challenges.
- Predictive Writing and Word Suggestions: Helps students with writing difficulties express complex ideas more fluently.
- Real-Time Translation: Supports multilingual learners by providing immediate translation of classroom content into multiple languages.
- Adaptive Tutoring Systems: Personalizes practice and remediation aligned with IEP goals, offering scalable support beyond one-on-one sessions.
- Classroom Analytics: Tracks engagement and identifies where students with disabilities may be struggling, flagging issues for timely intervention.

These tools expand on earlier assistive technologies, but AI’s adaptive capabilities make them more responsive and less reliant on manual input from teachers.

Opportunities for Schools

The integration of AI into accessibility work creates significant opportunities:

- Scalability: Supports that once required dedicated human intervention can now be offered to more students simultaneously.
- Consistency: AI tools deliver standardized supports across classrooms, reducing variability that often occurs when services depend on individual staff availability.
- Family Engagement: Parent dashboards and progress reports give families clearer insights into their child’s learning journey.
- Data-Driven Planning: Administrators can identify trends across populations, helping allocate resources strategically.

For districts facing shortages of special education staff, AI can provide meaningful support in bridging service gaps.

Challenges and Risks of Using AI

With new opportunities come significant challenges:

1. Funding Inequities

AI-driven accessibility tools often require licenses, devices, and training. Wealthier districts are better positioned to afford them, while underfunded schools risk leaving students without access to transformative supports.

2. Privacy Concerns

Accessibility tools collect sensitive data, including speech patterns, learning difficulties, and behavioral records. This data requires stronger protections than general classroom information. District leaders must ensure compliance not only with FERPA and COPPA but also with state-level privacy laws.

3. Over-Reliance on Technology

While AI can supplement supports, it cannot replace the human relationships central to special education. Students with disabilities often benefit most from personalized, empathetic guidance—something no algorithm can replicate.

4. Implementation Gaps

Without proper training, staff may underuse or misuse AI accessibility tools. Inconsistent application across classrooms risks creating uneven learning experiences.

Sidebar: Administrative Checklist for AI in Accessibility

Before adopting AI tools in special education, districts should confirm:

- Does the tool align with IDEA requirements and the student’s IEP?
- How is sensitive data stored, secured, and deleted?
- What ongoing training will staff receive?
- How will the district fund equitable access over multiple years?
- Are families included in decision-making about tool selection and use?

The Legal and Policy Landscape

Special education is already governed by rigorous legal frameworks. Any AI adoption must align with:

- IDEA (Individuals with Disabilities Education Act): Requires that eligible students with disabilities receive a free appropriate public education (FAPE), including the special education and related services they need.
- Section 504 of the Rehabilitation Act: Prohibits discrimination on the basis of disability in programs that receive federal funding.
- Americans with Disabilities Act (ADA): Prohibits disability discrimination and requires accessibility across public life, including education.

AI tools must be carefully vetted to ensure they enhance, rather than undermine, compliance with these laws. For example, districts cannot substitute an AI tutoring platform for mandated one-on-one human support unless explicitly permitted within an IEP.

Implications for Stakeholders

Administrators: Must prioritize equitable funding models so AI supports are not restricted to wealthier districts. Procurement processes should emphasize accessibility as a core criterion, not an afterthought.

CTOs and Technology Directors: Play a critical role in ensuring tools are compliant with privacy standards. They must also monitor how accessibility data is handled by vendors, with clear provisions in contracts.

Curriculum Directors: Should ensure AI accessibility features align with curricular goals and do not create parallel learning tracks that isolate students with disabilities.

Parents and Families: Need transparency about how AI is being used, how it impacts their child’s learning, and how data is managed. Engagement in decision-making builds trust and ensures compliance with legal rights.

School Boards: Set the tone for ethical adoption by requiring public discussion of accessibility tools, funding commitments, and policy safeguards.

Looking Forward: A Decade of Possibility and Risk

The next 5–10 years may see AI fundamentally reshape accessibility. Future developments could include:

- Personalized Assistants embedded in devices that adapt in real time to student needs across all subjects.
- Advanced Voice Recognition that accurately interprets atypical speech patterns, enabling more natural communication.
- Integrated Learning Ecosystems that connect accessibility data across classrooms, services, and home environments.

Yet without deliberate action, risks loom. Unequal funding could make accessibility gains available only to affluent districts. Over-reliance on automated systems could sideline the personal interactions essential to student growth. And without robust policy frameworks, sensitive data could be mishandled or misused.

Balancing Innovation and Duty of Care

AI tools hold enormous promise for expanding inclusion and supporting students with disabilities and multilingual learners. But their adoption requires deliberate balance. Innovation cannot outpace oversight; efficiency cannot replace empathy; access cannot depend on district wealth.

For administrators, boards, and policymakers, the message is clear: AI accessibility is not simply about technology. It is about fulfilling a duty of care to ensure every student has the tools they need to succeed—safely, equitably, and responsibly.
